Most enterprise AI pilots fail quietly. Not because the technology doesn't work, but because nobody defined what "working" means before the pilot started. A team gets access to an AI video tool, a few people experiment with it, and 30 days later someone asks: "So, was it worth it?" Nobody has an answer.

The difference between a pilot that leads to a production rollout and one that fizzles into a canceled subscription comes down to structure: clear success metrics, the right people, well-chosen use cases, and a timeline with real checkpoints.

This guide walks through what a structured AI video pilot looks like, and how it works when the vendor builds that structure into the pilot program itself.

What an AI Video Pilot Actually Is

An AI video pilot is a time-boxed evaluation period — typically 30 days — where a selected group from your creative team uses an AI video platform on real projects. The goal isn't just to test whether the tool works technically. It's to answer a specific set of business questions: Does this reduce production time? Does the output quality meet our standards? Will the team actually use it?

A pilot is different from a proof of concept. A POC is technical validation: "Can this tool generate a 10-second clip from a text prompt?" A pilot goes further: "Can our team use this to produce content we actually ship, at a quality level our clients accept, faster or cheaper than our current process?"

That distinction matters because it changes what you measure and who needs to be involved.

Why Structure Is the Difference

The ad-hoc approach — someone signs up for a free trial and shows a few colleagues some outputs — is tempting because it requires no planning. It's also nearly useless for making a procurement decision.

Without structure, you end up with anecdotal feedback instead of data. "It's cool" and "it didn't work for my project" are not actionable inputs for a budget conversation. A structured pilot gives you:

Quantifiable adoption data. How many team members actually used the tool, how often, and for what types of work? If 12 out of 25 people (48%) are active after two weeks, that tells a very different story than if 3 out of 25 (12%) are.

Quality benchmarks. Side-by-side comparisons of AI-generated output against your current production process, assessed by the people who actually make quality calls.

Time and cost comparisons. Concrete measurements of how long tasks take with the AI tool versus your existing workflow — and what that means for production costs at scale.

Stakeholder alignment. When leadership asks whether to commit budget, you have a report with numbers instead of opinions.

The Metrics That Matter

Before anyone logs in, agree in writing on what success looks like. Effective pilot metrics are specific and measurable:

- Adoption rate: What percentage of registered users are actively generating content by day 30? A reasonable threshold is 50%.

- Generation volume: How many assets has the team produced? Track week-over-week. Are people using it more over time, or less?

- Quality acceptance rate: Of the content generated, what percentage passed your team's quality bar without significant manual rework?

- Time savings: For 3–5 representative tasks, how long did they take with the AI tool versus your current process?

Beyond the core metrics, watch for distribution. One power user generating 500 assets is a different signal than 15 users generating 30 each. The latter indicates actual workflow integration. The strongest possible signal: did any AI-generated content make it into a real deliverable — published, client-facing, or shipped internally?
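The core metrics above reduce to simple ratios, which makes them easy to compute the same way every week. Below is a minimal Python sketch, assuming you can export per-user activity from the platform into a list. The `PilotUser` fields and function names are hypothetical illustrations, not any vendor's export format. It computes adoption rate against the 50% threshold, quality acceptance rate, per-task time savings, and a simple concentration check (the share of all assets produced by the single most active user) for the distribution question.

```python
from dataclasses import dataclass


@dataclass
class PilotUser:
    """One registered pilot user (hypothetical export format)."""
    name: str
    assets_generated: int  # assets this user generated during the pilot
    active: bool           # was the user still generating content at day 30?


def adoption_rate(users: list[PilotUser]) -> float:
    """Share of registered users who are active."""
    if not users:
        return 0.0
    return sum(u.active for u in users) / len(users)


def quality_acceptance_rate(generated: int, accepted: int) -> float:
    """Share of generated assets that passed review without significant rework."""
    return accepted / generated if generated else 0.0


def time_savings(baseline_minutes: float, ai_minutes: float) -> float:
    """Fraction of time saved on one task versus the current process.

    baseline_minutes must be greater than zero.
    """
    return (baseline_minutes - ai_minutes) / baseline_minutes


def top_user_share(users: list[PilotUser]) -> float:
    """Share of all generated assets produced by the single most active user."""
    total = sum(u.assets_generated for u in users)
    if total == 0:
        return 0.0
    return max(u.assets_generated for u in users) / total


if __name__ == "__main__":
    # Illustrative numbers only: 25 registered users, 12 of them active.
    team = [PilotUser(f"user{i}", 30, True) for i in range(12)]
    team += [PilotUser(f"user{i}", 0, False) for i in range(12, 25)]
    rate = adoption_rate(team)
    status = "met" if rate >= 0.5 else "not met"
    print(f"Adoption: {rate:.0%} (50% target {status})")
    print(f"Top-user share: {top_user_share(team):.0%}")
```

The concentration check is what separates the two scenarios in the paragraph above: one power user with 500 assets pushes `top_user_share` toward 100%, while 15 users at 30 assets each keep it near 7%.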

Choosing the Right Use Cases

Don't try to test everything at once. Pick 2–3 specific use cases where AI video is most likely to deliver measurable value. Good pilot use cases share three traits: they happen frequently enough to generate meaningful data in 30 days, they currently cost enough time or money that improvement is noticeable, and they're representative of your broader content needs.

Strong candidates for creative teams:

- Social media video content (high volume, fast turnaround)

- Product demo or explainer clips (frequent, templatable)

- Concept visualization and storyboarding for client pitches (high creative value, traditionally time-intensive)

- Internal training or onboarding content (lower quality bar, good for learning the tool)

Avoid use cases that require capabilities the tool doesn't have yet, or that have such high quality requirements that AI-generated content would need extensive manual finishing. You want the pilot to demonstrate what's possible today.

Training Is the Biggest Predictor of Success

The single biggest factor in whether a pilot succeeds isn't the tool — it's training. Teams that receive structured onboarding in the first week adopt at significantly higher rates than those handed login credentials and a link to documentation.

A practical training structure for a 30-day pilot:

- Week 1: Kickoff covering the interface, basic generation workflows, and your selected use cases. 60–90 minutes, live, with screen sharing.

- Week 2: Follow-up on advanced features, prompting best practices, and questions from the first week of hands-on use.

- Weeks 3–4: Office hours and async support. Day-to-day questions handled internally; technical escalations go to the vendor.

If your platform vendor offers a dedicated Customer Success Manager for the pilot period, take it. The CSM knows the tool's capabilities and limitations better than anyone on your team will after a few days, and can prevent common pitfalls that waste pilot time.

This is where a lot of AI video vendors fall short. Enterprise teams consistently report that "thoughts and prayers" onboarding — a welcome email and a help center link — is the norm. It's also one of the clearest reasons pilots fail before they generate enough data to be useful.

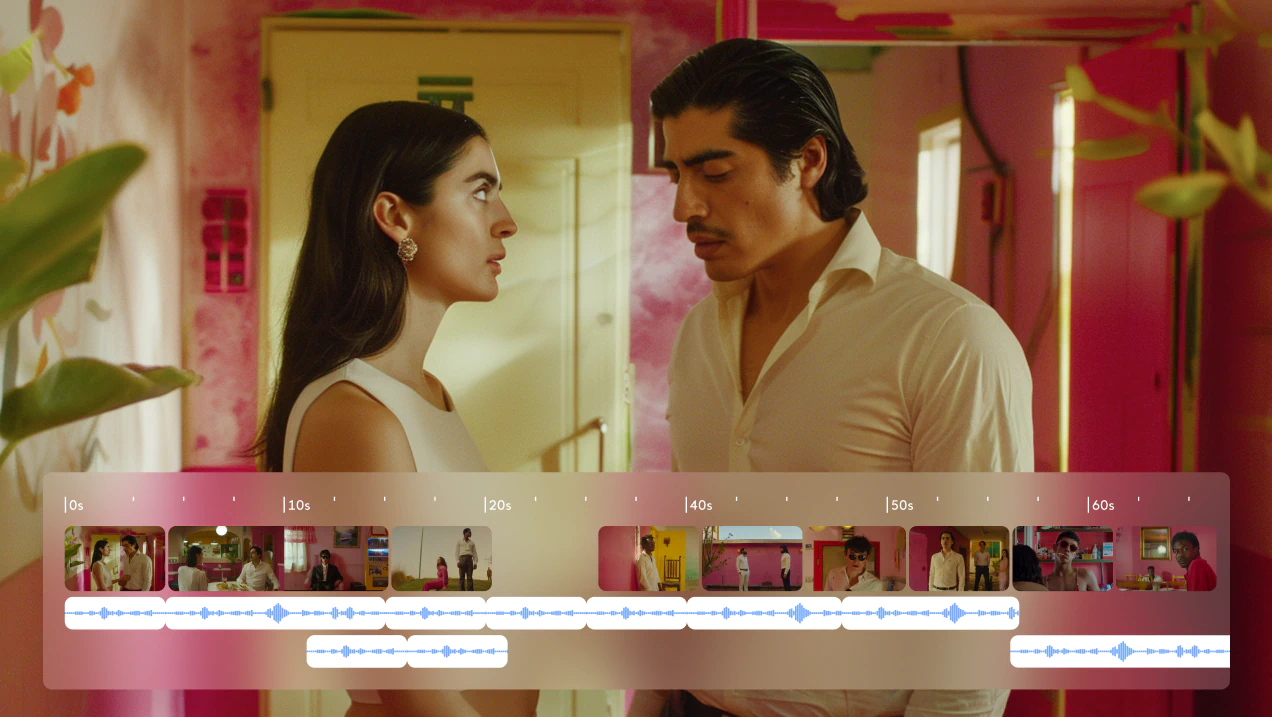

How the LTX Studio Pilot Is Built Differently

Most AI video tools leave pilot structure entirely to the customer. LTX Studio builds it into the program itself — a five-stage process that runs from contract signature to executive readout, with a dedicated CSM owning every step.

Here's what the program looks like in practice:

Stage 1 — Handover to CSM: Once the pilot is signed, your Account Executive passes the account to a dedicated Customer Success Manager. Goals, team composition, and use cases are agreed before the first session. You're not starting from zero at kickoff.

Stage 2 — Kickoff: Brand assets are reviewed. The Creative AI Strategy team begins building custom mockups in your visual language — not generic demo outputs, but content that looks like it came from your team. For enterprise deals, this has been a meaningful differentiator.

Stage 3 — Training: Weekly live sessions move your team from curious to producing real work, fast. Structured onboarding with screen sharing, prompting best practices, and hands-on walkthroughs built around your actual use cases — not a generic tutorial.

Stage 4 — Mid-Pilot Review: A structured checkpoint around day 14–21. Who's active, what's working, where there's friction. If adoption is below target, the CSM re-engages the team before time runs out. If certain use cases aren't delivering, focus narrows to what is.

Stage 5 — Summary Meeting: The pilot closes with an executive readout: outputs produced, usage data, performance against the metrics agreed at kickoff, and a clear path to the annual contract.

What the LTX Studio Pilot Includes

- Full LTX Studio Enterprise access for your team (seats defined upfront)

- A dedicated CSM through every stage of the program

- Custom brand mockups built by the Creative AI Strategy team in your visual identity

- Weekly live training sessions and structured onboarding

- Access to multiple AI models in one platform: LTX-2, Veo, Kling, Flux, and more

- SOC 2 compliance, IP ownership locked in upfront, zero model training on your data

- Executive Summary Report at close with outputs, usage data, and ROI indicators

The pilot runs at a one-time cost, fully credited toward your annual contract. No lock-in, no auto-renewal.

Who It's Built For

The program is designed for the people who own the decision and the people who live with it:

- CMOs building the internal case for AI adoption at scale

- VPs and Chief Creatives maintaining brand quality while accelerating output

- AI/Tech Directors evaluating security, IP, and integration requirements

- In-house brand studios that move faster than agencies and need a tool built for that pace

Unlike Runway, Sora, or Kling, LTX Studio is a full production suite — not just a model. The pilot is designed to prove that at the level your team actually operates, on the content you're actually shipping.

From Pilot to Production

A successful pilot doesn't end with a report — it ends with a decision. The teams that scale most effectively do three things:

- Expand from what worked. Start with the use cases that delivered measurable results. Roll out from there.

- Build internal champions. Your pilot champion becomes the first node in a network. Identify an advocate in every team that will use the tool at scale.

- Integrate, don't replace. Map where AI generation fits in your existing production pipeline — from brief to delivery — and make that the standard operating procedure.

If your pilot demonstrates that LTX Studio can reduce production time, maintain quality standards, and drive real adoption across your creative team, you have the data to make that case to leadership. The pilot did its job.
